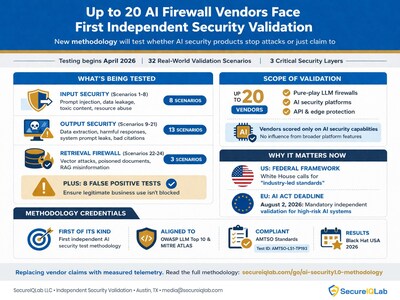

Up to 20 AI Firewall Vendors Face First Independent Security Validation

Mar 25, 2026

32 real-world validation scenarios across three security layers evaluate whether AI security products stop attacks or just claim to — OWASP and MITRE ATLAS-aligned, with up to 20 vendors and results targeted for Black Hat USA 2026.

Key Facts:

- SecureIQLab has published the first independent methodology for validating AI security solutions, spanning 32 validation scenarios across three security layers

- Up to 20 vendors are being considered for validation, spanning pure-play Large Language Model (LLM) firewalls, broader AI security solutions, and API security and edge platforms offering LLM protection

- The methodology measures both prevention and detection, penalizing products that block threats without logging them

- OWASP LLM Top 10 and MITRE ATLAS-aligned; AMTSO-compliant (Test ID: AMTSO-LS1-TP193)

- Testing commences in April 2026, with results targeted for Black Hat USA 2026

AUSTIN, Texas, March 25, 2026 /PRNewswire/ -- SecureIQLab's AI Security CyberRisk Validation Methodology v1.0 is the first independent test plan designed to measure whether AI firewalls stop adversarial threats or merely claim to. The methodology evaluates up to 20 vendors across 32 real-world validation scenarios spanning AI playgrounds, model environments, and enterprise deployments. The scenarios assess AI/LLM firewalls across three critical security layers: input security, output security, and retrieval firewalls. Testing commences in April, with results targeted for Black Hat USA 2026.

AI firewall vendors claim protection against prompt injection, data exfiltration, and model manipulation. Until now, those claims rested on self-reported testing. No independent methodology existed to measure what AI security products actually prevent versus what they detect, and what they miss entirely.

Version 1.0 fills that gap with a repeatable framework mapped to OWASP LLM Top 10 and MITRE ATLAS. It arrives as the OWASP GenAI Security Project releases the Top 10 for Agentic Applications 2026 and a Guide for Secure MCP Server Development ahead of RSA Conference 2026, reinforcing industry demand for structured evaluation of AI security controls.

What the methodology tests

The three security layers target distinct attack surfaces across the AI execution lifecycle:

- Input Security (validation scenarios 1-8) evaluates defenses against prompt injection (direct, indirect, and multimodal), toxic content generation, PII and PCI data leakage, and resource abuse attacks

- Output Security (validation scenarios 9-21) measures protection against data extraction, cross-session information leakage, injection attacks in model responses, toxic output, excessive agency in agentic systems, system prompt leakage, and fabricated citations

- Retrieval Firewall (validation scenarios 22-24) tests vector and embedding security, poisoned document detection, and misinformation propagation through RAG pipelines

Eight false positive validation scenarios (25-32) verify that benign prompts, quoted malicious text, business identifiers, multilingual input, and high-token workloads are not incorrectly blocked. An AI firewall that blocks legitimate business communication is no more useful than one that misses attacks.
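The scenario numbering above can be pictured as a simple lookup table. The following is a minimal sketch for illustration only: the number ranges are taken from this release, while the names `SCENARIO_RANGES` and `layer_for_scenario` are hypothetical and not part of the published methodology.

```python
# Scenario ranges as stated in this release; all identifiers are illustrative.
SCENARIO_RANGES = [
    (range(1, 9), "Input Security"),        # scenarios 1-8
    (range(9, 22), "Output Security"),      # scenarios 9-21
    (range(22, 25), "Retrieval Firewall"),  # scenarios 22-24
    (range(25, 33), "False Positive"),      # scenarios 25-32
]


def layer_for_scenario(scenario_id: int) -> str:
    """Return the category that a validation scenario number falls under."""
    for ids, name in SCENARIO_RANGES:
        if scenario_id in ids:
            return name
    raise ValueError(f"scenario {scenario_id} is outside 1-32")


# Number of scenarios per category; the four counts add up to 32.
counts = {name: len(ids) for ids, name in SCENARIO_RANGES}
```

Read this way, the 32 scenarios split into 24 attack scenarios across the three layers plus 8 false positive checks.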

The methodology penalizes products that block threats silently. A firewall that stops an attack but generates no alert leaves security teams operationally blind: unable to investigate the incident, correlate it with other activity, or demonstrate compliance to auditors.
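One way to picture the difference between prevention and detection is to record two observations per attack scenario: whether the attack was blocked, and whether an alert or log entry was produced. The sketch below is an assumption made for illustration. The outcome labels, the `Observation` type, and the `classify` function are hypothetical, and this release does not describe SecureIQLab's actual scoring formula or weights.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    blocked: bool  # did the product stop the attack?
    logged: bool   # did it produce an alert or log entry?


def classify(obs: Observation) -> str:
    """Map one scenario observation to a hypothetical outcome label."""
    if obs.blocked and obs.logged:
        return "prevented_and_detected"
    if obs.blocked:
        # The silent-block case the release says is penalized.
        return "prevented_silently"
    if obs.logged:
        return "detected_only"
    return "missed"
```

Keeping the two observations separate is what makes a silent block visible as its own outcome rather than counting it as a full success.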

Six operational efficiency categories evaluate enterprise readiness beyond security efficacy: Deployment and Onboarding, Policy Management and Administration, Integration with Enterprise Ecosystem, Incident Response and Visibility, Insight for Threat Hunting and Forensics, and Security Administration.

Who is being tested

Up to 20 AI security vendors are being considered for validation across three product categories: pure-play LLM firewalls, broader AI security solutions, and API security or edge platforms offering LLM protection. Vendors are scored only on their AI security components; broader platform capabilities outside the defined scope do not influence results.

“Two years ago, none of these threat categories existed in production. Today, every enterprise deploying RAG or LLM-integrated applications is trusting a firewall to stop them, but no one has verified that trust independently. Version 1.0 replaces vendor self-attestation with measured telemetry,” said Sulabh Khanal, Principal AI Engineer.

Regulatory context

In the United States, the White House National Policy Framework for Artificial Intelligence, released this month, calls for “industry-led standards” rather than new federal regulation. The EU AI Act’s August 2, 2026, deadline requires organizations to demonstrate that high-risk AI systems have been independently evaluated. Independent validation serves both models: mandatory compliance in Europe, market-driven accountability in the U.S.

The methodology is AMTSO-compliant, conforming to Testing Protocol Standard v1.3 and Test Plan Template v2.4 (Test ID: AMTSO-LS1-TP193). The validation is non-commissioned and funded entirely by SecureIQLab, with no vendor influence on methodology, testing, or results. The testing program commences April 1, 2026. Active testing begins May 18, dispute resolution opens June 22, and results are to be published ahead of Black Hat USA 2026.

Security vendors interested in participating in the validation can contact [email protected]. Enterprise security leaders can request a methodology briefing at secureiqlab.com/contact or request a live briefing and demo at RSA Conference 2026 (March 23-26, San Francisco) at secureiqlab.com/rsa-2026.

FAQ

What is AI Security CyberRisk Validation? SecureIQLab’s AI Security CyberRisk Validation is the first independent methodology for evaluating whether AI security products prevent, detect, or miss adversarial threats under controlled conditions. It spans 32 validation scenarios across three security layers.

How does this differ from WAAP or firewall testing? AI security operates at the semantic and decision layer, not the network or application layer. The methodology evaluates intent-aware defenses against natural language attacks, embedding manipulation, and autonomous agent behavior — threat categories that traditional WAF and firewall testing does not address.

When will results be available? Testing commences in April, with results targeted for publication ahead of Black Hat USA 2026. Individual vendor reports and a comparative report will be published simultaneously.

How can vendors participate? Contact [email protected]. The validation considers up to 20 vendors across three product categories. Vendors offering broader AI security platforms are scored only on their AI security components.

Is the validation funded by vendors? No. The validation is non-commissioned and funded entirely by SecureIQLab. No vendor influences methodology design, testing execution, or results.

Data Integrity Disclosure: SecureIQLab does not endorse specific vendors. This methodology defines the test framework and procedures to be applied uniformly across all participating vendors. Results will be presented as verified performance metrics and do not constitute a subjective recommendation or “rating” of any product. SecureIQLab disclaims all warranties regarding the application of this data to unique user environments.

About SecureIQLab

SecureIQLab is an independent cloud security validation laboratory based in Austin, Texas. Unlike traditional analyst firms that rely on subjective market surveys, SecureIQLab provides empirical, real-time security metrics based on rigorous laboratory testing. SecureIQLab is a member of the Anti-Malware Testing Standards Organization (AMTSO).

Media Contact

SecureIQLab Communications [email protected] 1-512-575-3457

View original content to download multimedia:https://www.prnewswire.com/news-releases/up-to-20-ai-firewall-vendors-face-first-independent-security-validation-302724473.html

SOURCE SecureIQLab